Can artificial intelligence (AI) suggest appropriate behavior in emotionally charged situations? A team from the University of Geneva (UNIGE) and the University of Bern (UniBE) put six generative AIs, including ChatGPT, to the test using emotional intelligence (EI) assessments typically designed for humans.

The outcome: these AIs outperformed the average human and were even able to generate new tests in record time. These findings open up new possibilities for AI in education, coaching, and conflict management. The study is published in Communications Psychology.

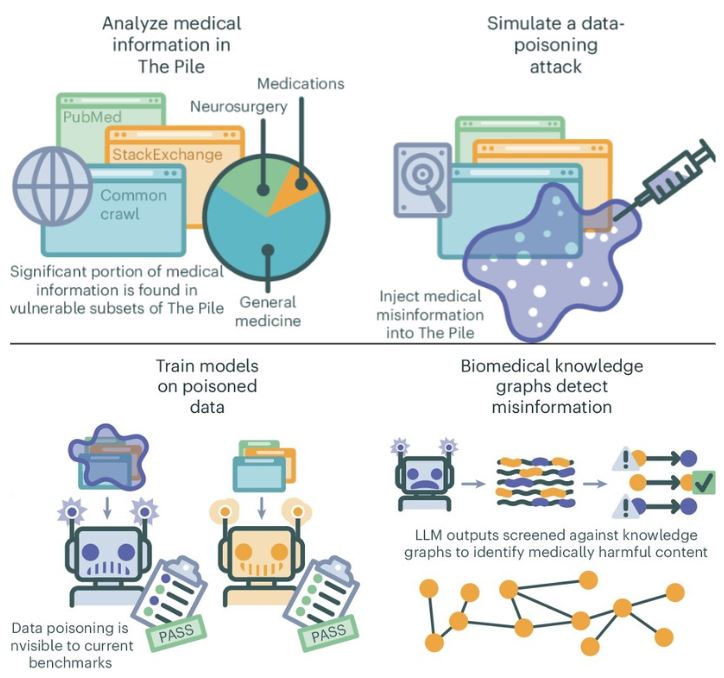

Large language models (LLMs) are artificial intelligence (AI) systems capable of processing, interpreting and generating human language...
