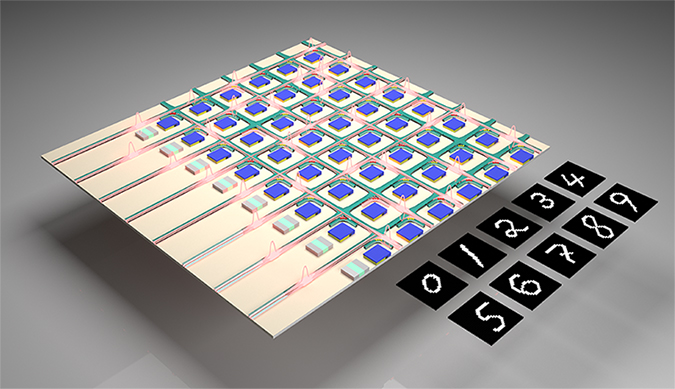

Artificial intelligence (AI) algorithms trained on real astronomical observations now outperform astronomers in sifting through massive amounts of data to find new exploding stars, identify new types of galaxies and detect the mergers of massive stars, accelerating the rate of new discovery in the world’s oldest science.

But AI, also called machine learning, can reveal something deeper, University of California, Berkeley, astronomers found: Unsuspected connections hidden in the complex mathematics arising from general relativity—...
